Imagine a world where machines learn like we do! It began with curiosity and a dream to change the future. We’re lucky to see how Artificial Intelligence makes life more fun every day.

Ever wonder who taught computers to see faces or hear voices? A scientist named Geoffrey Hinton worked hard to solve these mysteries. His work on artificial neural networks helped create the smart tools we use today!

We want every child to feel this spark of discovery! That’s why you should try Debsie Gamified Courses at https://debsie.com/courses. Learning about AI is an exciting journey that lets you grow and play while learning new things!

Key Takeaways

- Widely regarded as the “Godfather of AI” for his life’s work.

- Co-authored influential papers on backpropagation in 1986.

- Shared the 2018 Turing Award with Yoshua Bengio and Yann LeCun for breakthroughs in deep learning.

- Shared the 2024 Nobel Prize in Physics with John Hopfield for foundational machine learning discoveries.

- Advocates for safety and ethics in the future of computer science.

- Inspires students to explore technology through interactive learning methods.

The Early Life and Academic Foundations of Geoffrey Hinton

Geoffrey Hinton’s journey into AI started with a strong science background. He was born on December 6, 1947, in Wimbledon, England. He went to Clifton College in Bristol.

Influences from a Scientific Family

Hinton’s family loved science. His father, Howard Hinton, was an entomologist. Growing up around scientists made him curious and good at solving problems from a young age.

Key influences from his family include:

- A strong emphasis on scientific inquiry!

- Exposure to various fields of study, including biology and psychology!

- An environment that encouraged curiosity and exploration!

The Pursuit of Cognitive Psychology

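At King’s College, Cambridge, Hinton earned a degree in experimental psychology in 1970. He later completed a PhD in artificial intelligence at the University of Edinburgh. Psychology helps us understand how we think and learn, so it fit his interest in making machines think.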

Studying psychology gave Hinton a close look at how people think and learn. He carried those ideas into his later work on artificial neural networks.

Together, his scientific upbringing and his studies set the stage for his career in AI.

The Intellectual Journey of Geoffrey Hinton

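Geoffrey Hinton’s journey changed the direction of AI research. He wanted machines to learn from experience, the way people do.

At the time, this was a new and unpopular idea. Most researchers favored symbolic AI, which relied on hand-written rules and symbols, and Hinton thought that approach leaned on rules too heavily.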

Challenging the Status Quo of Symbolic AI

Hinton saw problems with symbolic AI. It struggled with messy real-world data such as images and speech, so he looked to the brain for answers.

“Understanding the mind through the brain is getting closer,” Hinton says. He wanted AI to be more like our brains.

“You can’t understand a complex system like the brain by analyzing it at just one level.”

The Development of Connectionism

Hinton chose connectionism for a big reason. It explains intelligence as the result of many simple units, or neurons, working together. No single unit is smart, but the network as a whole can produce smart behavior.

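He was inspired by Donald Hebb, who proposed that connections between neurons grow stronger when the neurons are active together, and by Frank Rosenblatt, who built the perceptron, an early learning machine. Hinton built on their ideas by studying how networks of such units learn.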
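To see how one simple unit can learn, here is a minimal Python sketch of a Rosenblatt-style perceptron that learns the logical AND rule. It is an illustration only, not code from Hinton's research: the function names, the toy data, the learning rate, and the number of training rounds are all assumptions picked for this example.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs is above zero, else stay quiet (0)."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Nudge the weights toward the right answer every time the unit makes a mistake."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Truth table for logical AND (hypothetical toy data)
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(predictions)  # [0, 0, 0, 1]
```

The unit starts out knowing nothing (all weights are zero) and only changes when it gets an answer wrong. A single perceptron can only learn rules that a straight line can separate, which is one reason researchers went on to study networks with many units.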

The Breakthrough of Backpropagation

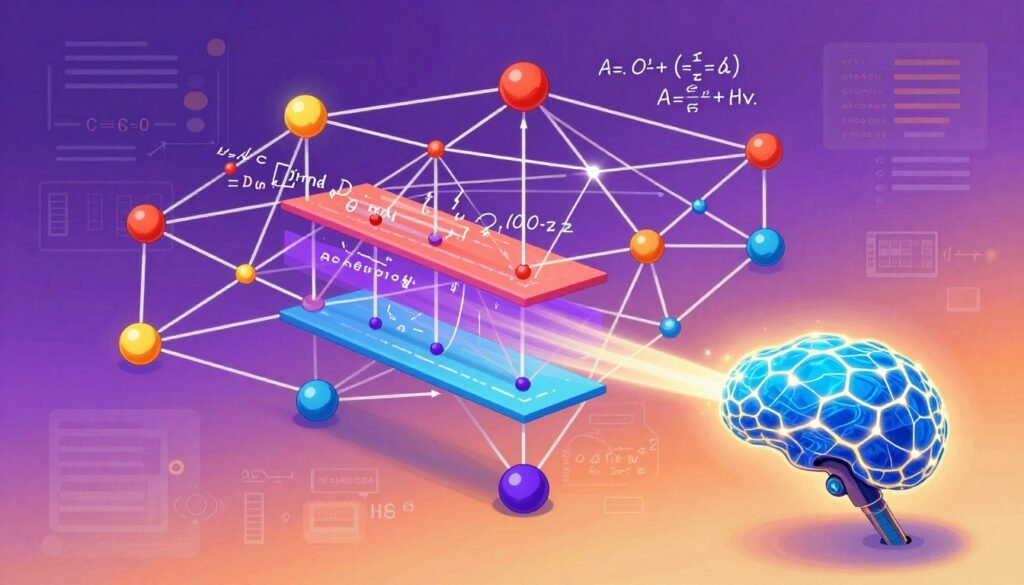

Backpropagation is a big deal in AI. It was made famous in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams. This algorithm helps neural networks learn complex things, and it’s a key part of the deep learning we use today.

How Neural Networks Learn

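Neural networks learn by adjusting the strength of their connections, called weights. Backpropagation is the main tool for this. It measures the error in the network’s prediction, works backward through the layers to see how much each weight contributed, and nudges every weight to shrink that error.

When Rumelhart, Hinton, and Williams published their backpropagation paper in 1986, it was a big moment. It showed that networks with hidden layers could learn useful internal representations on their own.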
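The backward step can be sketched in plain Python on the smallest possible network: one input, one hidden unit, and one output. This is a teaching sketch under stated assumptions, not the code from the 1986 paper. The starting weights, the single training example, the learning rate, and the squared-error loss are all made up for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w1, w2, x):
    """Forward pass: input -> hidden unit -> output."""
    hidden = sigmoid(w1 * x)
    output = w2 * hidden
    return hidden, output


def loss(w1, w2, x, target):
    """Squared error between the prediction and the target."""
    _, output = forward(w1, w2, x)
    return 0.5 * (output - target) ** 2


def backward(w1, w2, x, target):
    """Backward pass: apply the chain rule from the output error back to each weight."""
    hidden, output = forward(w1, w2, x)
    d_output = output - target                       # d(loss)/d(output)
    grad_w2 = d_output * hidden                      # because output = w2 * hidden
    d_hidden = d_output * w2                         # error flowing back into the hidden unit
    grad_w1 = d_hidden * hidden * (1 - hidden) * x   # sigmoid slope is hidden * (1 - hidden)
    return grad_w1, grad_w2


# Hypothetical starting weights and one training example
w1, w2 = 0.5, -0.3
x, target = 1.0, 0.8
learning_rate = 0.5

loss_before = loss(w1, w2, x, target)
for _ in range(200):
    grad_w1, grad_w2 = backward(w1, w2, x, target)
    w1 -= learning_rate * grad_w1   # step each weight against its gradient
    w2 -= learning_rate * grad_w2
loss_after = loss(w1, w2, x, target)

print(f"loss before: {loss_before:.4f}, loss after: {loss_after:.6f}")
```

Real networks have millions of weights, but the recipe is the same: run forward, measure the error, pass the blame backward, and take a small step that lowers the error.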

Overcoming the Vanishing Gradient Problem

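The vanishing gradient problem is a big challenge. As the error signal travels backward through many layers, it gets multiplied by small numbers again and again until it almost disappears, so the early layers learn very slowly or stop learning. Hinton and others found ways to reduce this, like using different activation functions or better starting values for the weights.

Reducing this problem made it practical to train deeper networks, which has led to big improvements in AI. Backpropagation and these refinements are still at the core of AI today!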
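A few lines of Python show why the signal fades. This sketch multiplies one slope per layer, the way the chain rule does, and compares the classic sigmoid activation with ReLU, one commonly used alternative. It is a simplification: every connection weight is assumed to be 1 and every layer is assumed to see the same input value, which a real network would not do.

```python
import math


def sigmoid_derivative(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)   # never larger than 0.25


def relu_derivative(z):
    return 1.0 if z > 0 else 0.0


def gradient_through_layers(derivative, pre_activation, num_layers):
    """Multiply one local slope per layer, as the chain rule does (weights fixed at 1)."""
    gradient = 1.0
    for _ in range(num_layers):
        gradient *= derivative(pre_activation)
    return gradient


for depth in (1, 5, 20):
    sig = gradient_through_layers(sigmoid_derivative, 0.0, depth)
    relu = gradient_through_layers(relu_derivative, 1.0, depth)
    print(f"{depth:>2} layers  sigmoid: {sig:.2e}  ReLU: {relu:.1f}")
```

Even in the sigmoid's best case (a slope of 0.25), twenty layers shrink the signal to less than a trillionth of its size, while the ReLU slope of 1 passes it through unchanged for active units.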

The Boltzmann Machine and Statistical Physics

Hinton and his collaborators mixed statistical physics with machine learning. They created the Boltzmann machine, a network that learns the patterns in data and can generate similar examples. This mix of physics and AI has led to big steps forward in how machines learn and create.

Bridging Physics and Machine Learning

The Boltzmann machine was introduced in the 1980s by Geoffrey Hinton, David Ackley, and Terry Sejnowski. It borrows from statistical physics the idea of giving every state of a system an energy, where low-energy states are the most likely.

Statistical physics describes the behavior of huge groups of particles using probabilities. Hinton and his collaborators used the same math to build a generative model, one that can learn complex patterns and reproduce them.

Applications in Generative Modeling

The Boltzmann machine can make new data that looks like the data it learned from. That ability helps with tasks like recognizing images and sounds, and it can also produce realistic synthetic data.

When using Boltzmann machines for generative modeling, the network is trained on a dataset. Training adjusts the weights so that patterns seen in the data become low-energy, high-probability states. Sampling from the trained network then produces new examples that resemble the training data.
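The link between energy and probability can be sketched with a tiny three-unit example in Python. This is only an illustration under stated assumptions: the weights and biases below are hand-picked rather than learned, and the probabilities are computed by listing all eight states, which only works because the network is so small. Real Boltzmann machines learn their weights from data and use sampling methods instead.

```python
import itertools
import math
import random


def energy(state, weights, biases):
    """Energy of a binary state: lower energy means the network 'prefers' it."""
    pair_term = sum(
        weights[i][j] * state[i] * state[j]
        for i in range(len(state))
        for j in range(i + 1, len(state))
    )
    bias_term = sum(b * s for b, s in zip(biases, state))
    return -pair_term - bias_term


def boltzmann_distribution(weights, biases):
    """Probability of every state: exp(-energy), scaled so the total is 1."""
    states = list(itertools.product([0, 1], repeat=len(biases)))
    unnormalised = [math.exp(-energy(s, weights, biases)) for s in states]
    total = sum(unnormalised)
    return {s: u / total for s, u in zip(states, unnormalised)}


# Hypothetical hand-picked parameters: units 0 and 1 like to be on together.
WEIGHTS = [[0.0, 2.0, 0.0],
           [2.0, 0.0, -1.0],
           [0.0, -1.0, 0.0]]
BIASES = [0.0, 0.0, 0.5]

distribution = boltzmann_distribution(WEIGHTS, BIASES)
states, probabilities = zip(*distribution.items())

rng = random.Random(0)
samples = rng.choices(states, weights=probabilities, k=5)  # "generate" new patterns
most_likely = max(distribution, key=distribution.get)

print("most likely state:", most_likely)  # (1, 1, 0)
print("samples:", samples)
```

The state with the lowest energy, where units 0 and 1 are both on, comes out as the most probable one, and drawing samples from the distribution is the toy version of generating new data.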

Hinton’s work on the Boltzmann machine has connected physics and AI. This has opened up new areas for research and use in machine learning. It shows how working together across fields can lead to new ideas and discoveries.

The ImageNet Moment and the Deep Learning Revolution

The ImageNet challenge was a big moment in AI history. It marked a shift towards deep learning!

This competition started in 2010. It asked teams to build algorithms that could sort images into 1,000 categories. In 2012, a University of Toronto team of Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton made a big change in AI research.

The AlexNet Architecture

Their entry, AlexNet, was a deep convolutional neural network. Its design let it train on GPUs, so it could learn from big datasets much faster than before.

AlexNet used many layers to learn from the ImageNet dataset. It had a top-5 error rate of 15.3%, meaning the correct label was missing from its five best guesses only 15.3% of the time. The next best entry scored 26.2%!
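A top-5 error rate counts an answer as wrong only when the correct label is missing from the model's five best guesses. Here is a minimal Python sketch of that calculation. The function name and the tiny made-up dataset are assumptions for illustration, not the official ImageNet scoring code.

```python
def top5_error(ranked_predictions, true_labels):
    """Fraction of images whose true label is missing from the model's five best guesses."""
    misses = sum(
        1
        for guesses, truth in zip(ranked_predictions, true_labels)
        if truth not in guesses[:5]
    )
    return misses / len(true_labels)


# Hypothetical ranked guesses (best first) for four images
predictions = [
    ["cat", "dog", "fox", "wolf", "lynx", "bear"],
    ["car", "bus", "truck", "van", "tram", "bike"],
    ["rose", "tulip", "daisy", "lily", "iris", "orchid"],
    ["owl", "hawk", "eagle", "crow", "dove", "swan"],
]
labels = ["lynx", "bike", "rose", "eagle"]

print(top5_error(predictions, labels))  # 0.25
```

In this toy example only the second image is a miss, because "bike" is the sixth guess, so one image out of four gives an error of 0.25.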

Proving the Power of Deep Neural Networks

AlexNet’s success was a big deal for deep learning. It showed deep neural networks could handle complex tasks like image recognition, and it sparked a wave of deep learning research.

Researchers soon applied deep neural networks to other problems, including natural language processing and game playing. Deep learning showed its power in many areas!

The ImageNet moment was more than a competition. It started a deep learning revolution. This revolution has changed AI research and keeps shaping it today!

Geoffrey Hinton and the Rise of Google Brain

In 2013, Google bought DNNresearch Inc., a startup Hinton had founded with two of his students. This brought Geoffrey Hinton to Google. It was a big change for him and for AI research.

Transitioning from Academia to Industry

Hinton’s move from academia to industry was huge. He split his time between the University of Toronto and Google, where he worked with the Google Brain team. This team used deep learning to improve Google’s products.

At Google, Hinton could work with many researchers and far more computing power. He used those resources to explore AI’s limits.

Scaling AI for Global Impact

Hinton helped bring deep learning to a global scale. Techniques he worked on reached many people through Google’s products, including speech recognition and image search.

Deep learning also became easier to build into everyday tools. It now powers things like voice assistants and the vision systems in self-driving cars. These changes are changing our lives.

Hinton stayed at Google for a decade before leaving in 2023. The work from those years is not just about tech. It’s about making the world better for all of us.

The Turing Award and Global Recognition

The ACM A.M. Turing Award is often called the ‘Nobel Prize of Computing.’ The 2018 award went to Geoffrey Hinton, Yoshua Bengio, and Yann LeCun. They were honored for their work in deep learning!

Honoring the Godfathers of AI

The nickname “Godfathers of AI” recognizes how much these three researchers shaped Artificial Intelligence. Geoffrey Hinton, Yoshua Bengio, and Yann LeCun kept working on neural networks when few others believed in them, and their work helped deep learning grow.

For more info, check out the University of Toronto’s news article.

The Legacy of the 2018 ACM A.M. Turing Award

The 2018 ACM A.M. Turing Award was a big deal. It recognized how deep learning changed computing, and how central the work of Hinton, Bengio, and LeCun was to AI’s growth.

| Year | Awardees | Contribution |
|---|---|---|
| 2018 | Geoffrey Hinton, Yoshua Bengio, Yann LeCun | Deep Learning |

Hinton, Bengio, and LeCun are called “Godfathers of AI” for good reason. Their work is still guiding the future of AI and computing.

Capsule Networks and the Future of Vision

Geoffrey Hinton’s work on capsule networks offers a new approach to computer vision! Capsule networks aim to fix some big problems with convolutional neural networks (CNNs). They could make image recognition and understanding much better.

CNNs have done great in many vision tasks, but they struggle to capture how the different parts of an image relate to each other.

Addressing the Limitations of Convolutional Neural Networks

A CNN can spot that an image contains eyes, a nose, and a mouth without checking that they are arranged like a face. Capsule networks try to fix this. They use capsules to keep track of how the parts of an object fit together.

Capsules are small groups of neurons. Instead of a single number, each capsule outputs a set of numbers that describes an object’s details, like where it is and how it’s facing. This helps AI see images in a more detailed way.
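One way to picture a capsule's output is as an arrow: its direction describes the object's details, and its length says how sure the capsule is that the object is there. The Python sketch below follows the "squashing" step described in the 2017 paper "Dynamic Routing Between Capsules" by Sabour, Frosst, and Hinton, which this article does not go into. The helper names and example vectors are assumptions for illustration.

```python
import math


def squash(vector):
    """Shrink a capsule's raw output so its length lies between 0 and 1, keeping its direction."""
    squared_norm = sum(v * v for v in vector)
    if squared_norm == 0.0:
        return [0.0 for _ in vector]
    norm = math.sqrt(squared_norm)
    scale = squared_norm / (1.0 + squared_norm) / norm
    return [scale * v for v in vector]


def length(vector):
    return math.sqrt(sum(v * v for v in vector))


weak = squash([0.1, 0.0])     # a faint signal: object probably absent
strong = squash([3.0, 4.0])   # a strong signal: object probably present

print(round(length(weak), 4), round(length(strong), 4))  # 0.0099 0.9615
```

Short arrows get squeezed toward zero and long arrows toward a length of one, so the length can be read like a probability while the direction still carries the details.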

Hierarchical Representations in AI

The idea of hierarchical representations is key for capsule networks. Small parts are grouped into larger parts, and larger parts into whole objects, so the network keeps track of how everything fits together. This could let AI systems understand complex scenes and objects more clearly.

Capsule networks are still a research idea, but this way of looking at images could lead to better object recognition and image segmentation.

As research continues, AI should get even better at understanding what it sees!

The Ethical Concerns and AI Safety Advocacy

Geoffrey Hinton is worried about where AI is heading. Because he helped build deep learning, his warnings about its risks carry a lot of weight.

Hinton wants AI to be safe. He believes AI systems should stay in line with human values, and that getting there takes both technical research and careful thinking about ethics.

Reflecting on the Risks of Superintelligence

Superintelligence means AI that is smarter than humans. Hinton fears it could become dangerous if we cannot control it, and he thinks we need to prepare carefully.

Let’s look at what superintelligence could mean:

| Risk Category | Description | Potential Impact |
|---|---|---|
| Loss of Control | AI systems becoming uncontrollable | High |
| Value Alignment | AI goals not aligning with human values | High |
| Job Displacement | AI replacing human jobs on a massive scale | Medium |

The Decision to Leave Google

Hinton left Google in 2023 so he could talk more freely about AI risks. He wanted to share his thoughts on AI ethics and safety without worrying about how his words would affect the company.

Hinton’s work on AI safety is important. He talks about what it takes to use AI responsibly, including technical safeguards, policy, and regulation.

In short, Hinton’s work shows we need to be careful with AI. As AI gets smarter, we must focus on ethics and safety. This way, AI can help us, not harm us.

The Role of Education in the AI Era

As we enter the AI era, education is changing a lot! How we learn and teach is shifting. It’s key to keep up with these changes to stay ahead.

Learning Complex Systems

Complex systems are key in AI. It’s vital for the next generation to understand them. Interactive and fun tools help make these complex ideas easier and more enjoyable to grasp.

You can see how Debsie is changing education with its new learning solutions.

Enhancing Skills with Debsie Gamified Courses

Debsie’s gamified courses at https://debsie.com/courses are a great way to learn complex systems. They help develop important skills in a fun and interactive way. Debsie uses game design to make learning rewarding and enjoyable.

Here’s a comparison of traditional learning versus gamified learning:

| Learning Method | Engagement Level | Retention Rate |
|---|---|---|
| Traditional Learning | Low | 60% |
| Gamified Learning | High | 90% |

By choosing gamified learning, we can make education more engaging and effective. This prepares people for the AI era’s challenges!

The Impact of Neural Networks on Modern Computing

Neural networks have changed how computers work. They can learn and adapt in amazing ways! This change is seen in many areas, like natural language processing, computer vision, and robotics.

Big steps have been made in natural language processing (NLP). Now, we have tools for language translation, sentiment analysis, and chatbots!

- Language translation services that can translate text from one language to another with high accuracy!

- Sentiment analysis tools that can determine the emotional tone behind a piece of text!

- Chatbots and virtual assistants that can engage in conversation with humans!

Transforming Natural Language Processing

New kinds of neural networks have helped NLP a lot. They make translation better, summarize long texts, and keep track of context!

- Improved language translation: More accurate and nuanced translations!

- Enhanced text summarization: Automatic summarization of large documents!

- Contextual understanding: Grasping the context of a conversation or text!

Advancements in Computer Vision and Robotics

Neural networks have also changed computer vision and robotics. They help machines understand pictures. This is used in image recognition, self-driving cars, and robotics!

- Image recognition: Identifying objects, people, and patterns within images!

- Autonomous vehicles: Self-driving cars navigating based on visual inputs!

- Robotics: Performing complex tasks requiring visual understanding!

These changes are making computing better. They open up new possibilities and uses!

The Philosophy of Intelligence and Consciousness

As we explore artificial intelligence, we wonder about intelligence and consciousness. AI gets smarter, making us think about what it means to be intelligent and human.

Geoffrey Hinton’s work has changed AI and started big debates. He asks if machines can really think. This question is key to understanding intelligence and consciousness.

Can Machines Truly Think?

Can machines think like us? Researchers disagree. Some say only humans can think because thinking requires consciousness. Others, including Hinton, think AI could become conscious too.

Hinton believes machines could be conscious. He says it’s not as strange as it sounds. This makes us want to learn more about consciousness in AI.

The Biological Basis of Artificial Learning

Artificial learning is loosely based on how our brains learn, and it’s a big part of AI today. By studying how we learn, researchers find ideas for making AI learn better.

Artificial neural networks were inspired by our brains. As we learn more about AI and biology, we might find new things about intelligence and learning.

By asking these big questions, we improve AI and learn more about ourselves. It’s a journey into the heart of what makes us human.

Collaborations That Shaped the Field

Geoffrey Hinton’s work with others has changed AI a lot! He teamed up with experts to make big steps forward. His teamwork has been key to his success.

Working with David Rumelhart and Terrence Sejnowski

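Hinton teamed up with David Rumelhart and Terrence Sejnowski on different projects. With Rumelhart and Ronald Williams, he popularized backpropagation. With Sejnowski and David Ackley, he developed the Boltzmann machine. Both projects changed neural network research.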

Key Contributions:

- Popularization of the backpropagation algorithm

- Advancements in neural network research

- Publication of influential papers on machine learning

In their 1986 paper, Rumelhart, Hinton, and Williams described backpropagation as a procedure that repeatedly adjusts a network’s weights to minimize the difference between its actual outputs and the desired outputs. This shows how important their work is.

“The development of backpropagation was a major breakthrough in the field of neural networks.”

Mentoring the Next Generation of AI Researchers

Hinton has helped many students become AI leaders. His guidance has shaped AI’s future.

| Mentee | Current Position | Contribution to AI |
|---|---|---|
| Student 1 | Research Scientist at Google | Advancements in Computer Vision |
| Student 2 | Professor at MIT | Development of New Neural Network Architectures |
| Student 3 | AI Engineer at Facebook | Improvements in Natural Language Processing |

Hinton’s work with students and others has made a big difference in AI. His dedication is seen in his students’ success and new tech.

Collaboration and mentorship are key for AI’s future. Working together, we can make new discoveries and a better AI future!

The Evolution of AI Hardware and Infrastructure

AI has grown fast thanks to new hardware and infrastructure! We keep pushing AI to do more. This means we need better and faster hardware.

Graphics Processing Units (GPUs) are key in AI. They run thousands of simple calculations at the same time, which makes them much faster than ordinary processors (CPUs) for training neural networks.

The Synergy Between GPUs and Neural Networks

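GPUs and neural networks work well together. Training a neural network mostly means multiplying big grids of numbers, and GPUs can do many of those multiplications at once. This makes it practical to train larger networks on more data.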

NVIDIA’s CEO Jensen Huang said GPUs are very important for AI. They help make AI better.

“The future of AI is not just about developing more sophisticated algorithms, but also about creating the hardware that can support these advancements.”

The Future of Specialized AI Chips

Now, companies are building chips designed just for AI. These chips, called AI accelerators, are made to run neural networks faster and more efficiently.

Google, with its Tensor Processing Units (TPUs), and NVIDIA are among the leaders in making these chips.

AI will need even better hardware soon. The future of AI hardware looks bright. New ideas will keep AI moving forward.

The Ongoing Debate on Artificial General Intelligence

The idea of artificial general intelligence is causing a big debate. It has big implications for our future! As AI gets better, we must think about how it will affect society and how to develop it responsibly.

Geoffrey Hinton, a leader in AI, worries about the dangers of artificial general intelligence. He says we need more research and public discussion.

Predicting the Timeline for AGI

People have different ideas about when artificial general intelligence will happen. Some think it could be in a few decades. Others believe it’s far off.

| Predicted Timeline | Expert Opinion |
|---|---|
| 2025-2050 | Some experts believe AGI could be achieved within this timeframe. |
| 2050-2100 | Others think it may take longer, potentially until the end of the 21st century. |
| Uncertain | A few experts argue that predicting a timeline is challenging due to the complexity of human intelligence. |

The Societal Implications of Rapid AI Progress

The effects of artificial general intelligence on society could be huge and wide-ranging. As AI gets better, we must think about how it will change jobs, education, and our lives.

Rapid AI progress could bring many good things, like better healthcare and higher productivity. But it also makes people worry about losing jobs and needing new skills.

As we go forward, we must focus on making AI development responsible. We need to make sure everyone benefits. This way, artificial general intelligence can make our lives better without making things worse for some people.

Conclusion

Geoffrey Hinton’s work has changed the world of computing. His ideas, from backpropagation to deep learning, helped AI grow into what it is today.

Now, AI is getting even better. You can learn about AI and get better at it. Check out Debsie’s fun courses at https://debsie.com/courses!

Geoffrey Hinton’s work shows us the power of new ideas and hard work. AI will keep changing our world. With the right skills, we can all help make these changes.