Ever wonder how your brain remembers a favorite song or the smell of fresh cookies? It feels like pure magic, doesn’t it? We’re excited to share the story of a brilliant mind who turned that magic into real science.

This amazing journey led to the 2024 Nobel Prize in Physics, which John Hopfield shared with Geoffrey Hinton. Hopfield’s discovery showed us how artificial neural networks can store and recreate patterns, a bit like our minds do. It’s all about how things connect and grow together!

These big ideas are behind the cool robots and smart apps we use every day! You can start your own learning adventure now. Try Debsie Gamified Courses at https://debsie.com/courses. It’s a fun way to explore the future!

Let’s dive in and see how John Hopfield’s work changed our world forever. We’re here to help you play, learn, and discover new things about tomorrow’s technology. Are you ready to become a little explorer with us?

Key Takeaways

- John Hopfield shared the 2024 Nobel Prize in Physics for foundational work on artificial neural networks.

- He created a way for computers to use associative memory like humans.

- His ideas helped build the foundation for modern machine learning.

- Artificial neural networks help computers recognize patterns and images.

- Debsie offers fun, gamified courses to help kids learn about AI.

- These scientific breakthroughs make our daily technology much smarter.

The Early Academic Journey of John Hopfield

Let’s explore John Hopfield’s early academic journey. We’ll see how his background in physics shaped his later research. His path started with a strong base in physics, which he later used in biological systems with great success!

Hopfield worked at top places like Princeton and Caltech. There, he showed his interdisciplinary approach to science. He mixed physics and biology to create models that explained complex biological things.

From Physics to Biological Systems

Hopfield moved from physics to biology for a reason. His early work in physics gave him a strong base. He then applied this to study biological systems, which was key to his later work on neural networks.

Using physics to study biology gave Hopfield a unique view. He used math from physics to understand complex biological behaviors. This led to new insights into how biological systems work.

The Interdisciplinary Approach to Scientific Discovery

Hopfield’s interdisciplinary approach is a big part of his career. He connected physics and biology, making big contributions to understanding complex systems. This way of working not only improved his research but also opened doors for new ideas in neural networks and artificial intelligence.

Learning from Hopfield’s journey shows us the value of exploring different fields. His work is a great example of how mixing disciplines can lead to amazing discoveries.

The Birth of the Hopfield Network

In 1982, John Hopfield introduced the network that now bears his name. This was a big step for neural networks, and it focused on associative memory!

The Hopfield network is a special kind of neural network. It uses ideas from physics, such as energy landscapes, to store patterns and recall them later. This gave machines a way to remember a whole pattern from only part of it.

Defining Associative Memory in Neural Networks

Associative memory is key in neural networks. It lets a machine recall a whole stored pattern from a clue, even when that clue is incomplete or noisy. The Hopfield network does this by moving step by step from the clue to the closest pattern it has stored.
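Here is a minimal sketch of that idea in plain Python. It is an illustration under simple assumptions, not Hopfield’s original code: neurons take the values +1 or -1, one eight-neuron pattern is stored with a Hebbian rule, and the helper names `train` and `recall` are made up for this example.

```python
def train(patterns):
    """Build a symmetric weight matrix with a Hebbian rule (no self-connections)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall(weights, state, max_sweeps=10):
    """Update one neuron at a time until a full sweep changes nothing."""
    state = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            # Each neuron looks at the weighted "votes" of all the others.
            field = sum(w * s for w, s in zip(weights[i], state))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


stored = [1, -1, 1, -1, 1, -1, 1, -1]   # the memory we want to keep
weights = train([stored])

noisy = list(stored)
noisy[0] = -noisy[0]                    # damage two of the eight values
noisy[3] = -noisy[3]

restored = recall(weights, noisy)
print(restored == stored)               # prints True
```

Two of the eight values were flipped, yet the network finds its way back to the stored pattern. That is what "remembering from a clue" looks like in code.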

To learn more about Hopfield networks, check out the Wikipedia page on Hopfield networks. It has lots of info on how they work and what they can do.

Energy Landscapes and Stability in Computation

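The idea of energy landscapes is important for Hopfield networks. Picture a hilly landscape where each stored memory sits at the bottom of a valley. Starting from a noisy input, the network updates its neurons one at a time, and each update keeps the energy the same or lowers it, until the network settles in a nearby valley.

Because the energy never goes up, the network always comes to rest, which is why recall is stable. The same trick can help with optimization problems: describe the problem as an energy, then let the network roll downhill toward a good solution.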
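The sketch below makes the energy picture concrete. It assumes the standard Hopfield energy with zero thresholds, E = -1/2 × (sum over all pairs of w_ij × s_i × s_j), symmetric weights, and one-at-a-time updates. The function names are hypothetical and chosen only for this illustration.

```python
def hebbian_weights(pattern):
    """Symmetric weights for one stored pattern, with a zero diagonal."""
    n = len(pattern)
    return [[0.0 if i == j else pattern[i] * pattern[j] / n for j in range(n)]
            for i in range(n)]


def energy(weights, state):
    """Hopfield energy with zero thresholds: lower means closer to a memory."""
    n = len(state)
    return -0.5 * sum(weights[i][j] * state[i] * state[j]
                      for i in range(n) for j in range(n))


def energy_trace(weights, state):
    """Run one asynchronous sweep and record the energy after each update."""
    state = list(state)
    trace = [energy(weights, state)]
    for i in range(len(state)):
        field = sum(w * s for w, s in zip(weights[i], state))
        state[i] = 1 if field >= 0 else -1
        trace.append(energy(weights, state))
    return state, trace


stored = [1, -1, 1, -1, 1, -1, 1, -1]
weights = hebbian_weights(stored)
noisy = [-1, -1, 1, 1, 1, -1, 1, -1]    # stored pattern with two values flipped

final_state, trace = energy_trace(weights, noisy)
print(trace[0], trace[-1])              # prints -0.5 -3.5
```

The energy starts at -0.5 for the noisy input and ends at -3.5 once the stored pattern is restored, and it never rises along the way.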

John Hopfield and the Principles of Neural Computation

John Hopfield changed how we see biology and machine learning. His work shows how living things process information. This has helped AI get better.

Hopfield’s work connects biology and machine learning. Neural computation is key. It helps us make AI that learns and adapts like living things.

Bridging the Gap Between Biology and Machine Learning

Hopfield’s research helps make machine learning better. By studying how neurons work, we can make AI smarter. This makes AI learn and adapt like us.

This connection between biology and AI is very important. It lets us make AI that learns from experience. This AI can handle new situations like humans do.

| Biological Process | Machine Learning Equivalent | Benefit |
|---|---|---|
| Neural Synapses | Artificial Neural Connections | Improved Learning Capabilities |
| Neural Plasticity | Adaptive Algorithms | Enhanced Flexibility |
| Pattern Recognition | Deep Learning Models | Better Accuracy |

The Concept of Emergent Properties in Artificial Systems

Hopfield also talked about emergent properties. These are complex behaviors from simple parts.

In AI, emergent properties lead to new and exciting things. Simple neural networks can create complex patterns. This lets AI do things we thought were impossible.

As AI gets smarter, understanding emergent properties is key. It lets us use neural computation fully. This way, AI can think and learn like us.

The Mathematical Foundations of Modern AI

Modern AI owes a lot to John Hopfield’s work. His ideas have greatly shaped the field. You’ll see how his work has influenced AI.

Let’s look at how Hopfield networks have helped deep learning. We’ll also see why optimization is key in training neural models.

Hopfield Networks and Deep Learning

Hopfield networks were created by John Hopfield. They are an important forerunner of today’s deep learning models. These networks can store patterns and recall them later.

Key Features of Hopfield Networks:

- Associative memory capabilities

- Recurrent neural network architecture

- Energy-based models for pattern recognition

Hopfield said, “The collective behavior of a system of simple neurons can be understood by analyzing the energy landscape of the network.” This idea helps us solve complex problems.


Optimization Techniques in Neural Models

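Optimization is crucial for training neural models. In a Hopfield network, each update lowers the network’s energy, an idea borrowed from physics. In modern deep learning, training algorithms lower a loss function in a similar way, step by step, so the network makes fewer mistakes.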

| Optimization Technique | Description | Application in AI |
|---|---|---|
| Gradient Descent | A first-order optimization algorithm used to minimize the loss function. | Training neural networks |
| Simulated Annealing | A global optimization technique inspired by the annealing process in metallurgy. | Optimizing complex systems |
| Stochastic Gradient Descent | A variant of gradient descent that updates the model using one example, or a small batch of examples, at a time. | Large-scale machine learning |

Using these optimization techniques improves neural model performance. It’s all about making the models better.
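To show what "minimizing a loss" means in practice, here is a small sketch of plain gradient descent. The loss function, the starting point, the learning rate, and the function names are all assumptions chosen for illustration. Real neural networks use the same idea with many more parameters.

```python
def gradient_descent(gradient, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to walk downhill on the loss."""
    x = start
    for _ in range(steps):
        x -= learning_rate * gradient(x)
    return x


def loss(x):
    """A toy loss shaped like a bowl, with its lowest point at x = 3."""
    return (x - 3.0) ** 2


def loss_gradient(x):
    """Slope of the toy loss at x."""
    return 2.0 * (x - 3.0)


minimum = gradient_descent(loss_gradient, start=0.0)
print(round(minimum, 4))   # prints 3.0
```

Each step moves a little way downhill, so the answer creeps toward the bottom of the bowl at 3. Stochastic gradient descent works the same way, but it estimates the slope from one example or a small batch instead of the full dataset.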

In conclusion, John Hopfield’s work has greatly influenced AI. Understanding his ideas helps us appreciate deep learning and optimization.

Key Contributions to Theoretical Physics

John Hopfield changed the game in theoretical physics. He used statistical mechanics in neural networks. This work deepened our understanding of complex systems and helped AI grow.

His research on neural networks was a big deal. Statistical mechanics helps us understand big systems. Hopfield used it to make neural networks easier to study.

Applying Statistical Mechanics to Neural Networks

Using statistical mechanics in neural networks is key. Energy landscapes and stability are important. Hopfield showed how neural networks work like physics.

This idea helped make neural networks better. It led to more efficient and robust algorithms. Now, we can tackle complex tasks with ease.

The Impact of Spin Glass Models on Information Theory

Spin glass models also played a big role. They help us understand neural networks. This gives us insights into how they store and get information.

These models also helped information theory grow. They show us what neural networks can do. This knowledge helps us make better AI.

In short, John Hopfield’s work in theoretical physics was huge. His use of statistical mechanics and spin glass models changed AI. His work keeps helping us understand complex systems and improve AI.

The Legacy of John Hopfield in Contemporary Research

John Hopfield’s work is still changing research today! The ideas he started have led to big steps in AI and neural networks, and they sit behind many new applications.

Why His Work Remains Relevant in 2024

Hopfield’s research is still important in 2024. His work on associative memory and neural networks has helped many fields. This includes computer science and neuroscience.

His work is great for pattern recognition and solving problems. These are big areas in AI today.

Current Applications in Pattern Recognition and Optimization

Hopfield’s work is seen in many new uses. For example, his ideas helped make image and speech recognition better.

| Application Area | Description | Impact |
|---|---|---|
| Pattern Recognition | Uses Hopfield Networks to find patterns in data. | Makes image and speech recognition more accurate. |
| Optimization Problems | Uses Hopfield’s ideas to solve hard problems. | Makes things more efficient in many areas. |
| Neural Networks | Influences new neural network designs. | Helps deep learning and AI grow. |

Looking ahead, Hopfield’s work will keep being key. We’re excited to see what’s next!

Learning Through Gamification and Modern Education

Discover how gamification changes the way you learn AI and machine learning with Debsie! At Debsie, we love making learning fun and exciting. Our games make AI and machine learning easier to understand.

Enhancing Technical Skills with Debsie Gamified Courses

Debsie’s games offer a special learning experience. They mix fun challenges with real-life uses. This makes learning AI and machine learning fun and interactive.

Our games fit different learning styles, so every student can learn and remember well.

Here’s a quick look at our games:

| Course Features | Benefits |
|---|---|
| Interactive Challenges | Hands-on experience with real-world problems |
| Gamified Learning Paths | Engaging and fun way to learn complex concepts |
| Personalized Feedback | Immediate insights into your learning progress |

Why Interactive Learning Matters for AI Enthusiasts

Interactive learning is key for AI fans. It lets them get hands-on and really understand tough ideas. By playing with interactive stuff, learners can try out different things and see how they work.

“The future of AI depends on our ability to make it accessible and understandable to everyone.”

At Debsie, we want AI education to be open and fun for everyone. Our tools help make AI easy for all, no matter their age or background.

Explore the curriculum at https://debsie.com/courses

Join us today and start learning AI and machine learning with Debsie’s games! We can’t wait to see you grow and learn with us.

The Evolution of Associative Memory Models

Associative memory models have grown a lot! They started simple and now are deep learning wonders. This shows how fast AI and neural networks are improving.

Knowing their history helps us get how complex today’s AI is. Let’s look at the big steps in their growth.

From Simple Networks to Complex Deep Learning

It all started with basic neural networks. They could remember and recall patterns. Then, these models got more complex, handling bigger data and harder tasks.

Key advancements include:

- New learning algorithms

- Better pattern recognition

- Deep learning frameworks

These changes made associative memory models very useful for AI.

| Feature | Early Models | Modern Models |
|---|---|---|
| Architecture | Simple Neural Networks | Deep Learning Architectures |
| Learning Algorithms | Basic Hebbian Learning | Advanced Optimization Techniques |
| Capacity | Limited Storage | High Storage Capacity |

Addressing Limitations in Early Neural Architectures

Early models had limits: they could store only a small number of patterns, and they were not very robust when too many memories were packed in. To fix this, new, smarter models were made. These could learn from more data and adapt to new information.
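To make the storage limit concrete, here is a sketch of the math usually used for the classic Hopfield network. The symbols are assumptions introduced for illustration: $N$ is the number of neurons, $P$ is the number of stored patterns, and $\xi_i^{\mu} \in \{-1, +1\}$ is the value of neuron $i$ in pattern $\mu$. The Hebbian rule builds each connection from the stored patterns:

$$
w_{ij} = \frac{1}{N} \sum_{\mu=1}^{P} \xi_i^{\mu} \xi_j^{\mu}, \qquad w_{ii} = 0
$$

For random patterns, a commonly cited estimate is that recall stays reliable only up to about

$$
P_{\max} \approx 0.138\, N
$$

so a network of 100 neurons can hold roughly 14 patterns before its memories start to blur together.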

“The development of more advanced neural architectures has been crucial in overcoming the limitations of early associative memory models, enabling more efficient and effective AI systems.”

Deep learning came along and made things better. It added more complexity and flexibility. Now, these models help in many areas, like seeing pictures and understanding words.

As AI keeps getting better, so will associative memory models. Knowing how they’ve grown helps us see how AI works today. It also shows us where we can make AI even better in the future.

John Hopfield and the Nobel Prize Recognition

John Hopfield’s Nobel Prize win is a big deal. It shows how important his work in AI is. We’re going to explore his achievement and its big impact worldwide!

Hopfield changed AI with his work on artificial neural networks. His Nobel Prize is a big win for his hard work. Princeton University says his research has led to big AI advances.

Significance of Physics-Based AI Research

Using physics in AI research has opened new doors. Hopfield showed how physics ideas can make neural networks better.

Physics-based AI research is key. It adds a strong, math-based way to make AI better. This helps solve hard problems that old AI methods can’t.

Global Impact of His Scientific Breakthroughs

Hopfield’s work has made a big difference globally. His Hopfield Network has inspired many. It has helped shape today’s AI.

His research has helped in many areas, like recognizing patterns and solving problems. His work keeps pushing AI forward with new discoveries.

Collaborations That Changed the Field

John Hopfield worked with top minds in neuroscience and computer science. This teamwork helped AI grow a lot. Together, they came up with new ideas that changed AI forever.

Working with Pioneers

Hopfield teamed up with leaders in his field. Collaborations with experts from other areas led to new ideas. These ideas might not have come up alone.

His work on the Hopfield Network mixed biology and computer science. This mix helped solve tough AI problems.

The Power of Cross-Pollination

Sharing ideas between fields sparked AI’s growth. By mixing knowledge, researchers made AI smarter and better. This teamwork is key to AI’s success.

| Field | Contribution | Impact on AI |
|---|---|---|
| Neuroscience | Understanding of biological neural networks | Inspired development of artificial neural networks |
| Computer Science | Advances in computational power and algorithms | Enabled efficient training of complex AI models |
| Physics | Application of statistical mechanics to neural networks | Improved understanding of network dynamics and stability |

As we explore AI’s future, teamwork will be more important. Collaborations and cross-pollination will keep AI growing. By working together, we can find new ways to improve AI.

The Philosophical Implications of Artificial Intelligence

Artificial Intelligence is growing fast. This makes us think about what it can and can’t do. We wonder if machines can think and learn like we do.

Can Machines Truly Think Like Biological Brains?

One big question is if machines can think like our brains. This question is about what makes us smart and aware. AI can learn a lot and do complex things, but can it really think like us?

AI has made big steps forward, especially in neural networks and deep learning. But do these machines really think like us? Philosophers and AI experts still debate this.

Reflecting on the Future of Human-Machine Interaction

AI is becoming part of our daily lives. We need to think about how we will interact with it. We should make AI systems that are good, fair, and clear.

The table below shows important points about how we interact with machines and what it means for the future:

| Aspect | Current State | Future Implications |
|---|---|---|
| AI Decision-Making | Primarily based on algorithms and data | Potential for more autonomous decision-making |
| Human-AI Collaboration | Increasingly used in various industries | More sophisticated collaboration tools and methods |
| Ethical Considerations | Growing concern about bias and fairness | Development of more robust ethical frameworks |

Understanding AI’s big ideas helps us deal with its good and bad sides. As we go on, talking about AI’s role in our world is key. We must make sure AI grows in a way that respects human values.

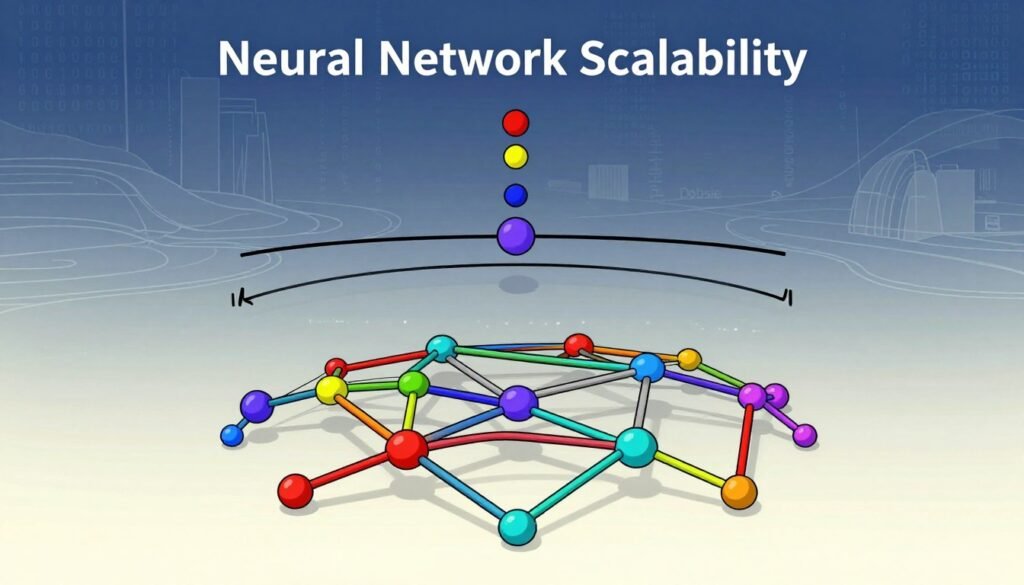

Challenges and Criticisms in Neural Network Theory

Neural network theory faces real challenges, and they make some people question the future of these models. We need to find ways to overcome them.

One big worry is scalability. As neural networks get bigger, they need more power and data. This can be expensive and bad for the planet. We must find ways to make training cheaper and greener.

Navigating the Hurdles of Scalability

To solve the scalability problem, we need new ideas. Researchers are looking at special kinds of neural networks that use less power. They’re also using new computer chips to make things faster.

| Scalability Challenge | Potential Solution | Benefits |
|---|---|---|
| Computational Intensity | Efficient Neural Network Architectures | Reduced Training Time |
| Data Requirements | Data Augmentation Techniques | Improved Model Generalization |
| Environmental Impact | Green AI Practices | Sustainable AI Development |

Debating the Future of Connectionist Models

There’s a big debate about the future of connectionist models. Some think they’ll keep leading in AI. Others believe new ways might be better.

As we look ahead, we must think about our choices. Should we keep improving connectionist models or try something new? We need to understand what works and be open to new ideas.

The Role of Mentorship in Scientific Advancement

Mentorship is key in making many scientific discoveries. People like John Hopfield show its power. It helps shape careers and moves science forward.

Inspiring the Next Generation of AI Researchers

Mentorship does more than guide. It inspires and grows the next AI researchers. John Hopfield’s career shows how mentorship helps in AI.

For example, Geoffrey Hinton shared the 2024 Nobel Prize in Physics with Hopfield. This shows how mentorship and hard work matter.

| Aspect of Career | Impact of Mentorship |
|---|---|
| Research Direction | Guides the focus and scope of research projects |
| Skill Development | Enhances technical skills and knowledge |
| Networking Opportunities | Introduces researchers to key figures and collaborations |

The Importance of Academic Rigor in Tech Development

Academic rigor is vital for tech growth. It makes sure progress is based on solid knowledge. John Hopfield’s work in neural networks is a great example.

Mentorship and academic rigor build a strong community. This community values knowledge and teamwork. It drives science forward and prepares future researchers for big challenges.

Future Directions for Neural Network Research

We’re entering an exciting era for neural networks. The boundaries of current knowledge are being pushed! There are many ways to advance neural network research. This includes using new architectures and computational techniques.

Beyond the Hopfield Network: What Lies Ahead?

The Hopfield Network has been key in neural network development. New architectures are being explored to improve it. Researchers are working on more complex and dynamic models. These models can learn from different data sets.

Integrating Quantum Computing with Neural Architectures

The mix of Quantum Computing and neural networks is exciting. This could change the field by making computations faster and more complex. Researchers are looking into how quantum principles can boost neural network training and efficiency.

| Research Area | Potential Impact | Current Status |
|---|---|---|
| Quantum Neural Networks | Revolutionize computation speed and complexity | Experimental |
| Advanced Associative Memory Models | Improve pattern recognition and recall | Developing |
| Neuromorphic Computing | Enhance AI hardware efficiency | Emerging |

Conclusion

John Hopfield’s work in AI has made a big impact. His Hopfield network helped shape today’s machine learning. His ideas still inspire new AI advancements.

His research did more than just introduce new ideas. It opened doors for future neural network and deep learning breakthroughs. For more on AI, check out the Nobel Prize website.

If you want to learn more about AI, Debsie has fun courses. They make learning AI and machine learning fun. Visit Debsie’s website to start learning today!